HashiCorp Vault on Exoscale Scalable Kubernetes Service (SKS)

The advent of multi-cloud environments with a variety of “Kubernetes as a service” offerings has spurred the deployment of applications in the cloud. In the cloud operating model, HashiCorp Vault provides security teams with a baseline for identity-based secrets management and encryption procedures. Due to the confidential nature of secret data, Vault is commonly deployed on isolated infrastructure, ideally on bare metal, to isolate the credentials as far as possible.

However, we observe that day-2 operations of the Vault platform itself (e.g., routine maintenance tasks or frequent upgrades) can be accomplished faster and more reliably on Kubernetes. In that sense, Vault as an application can also benefit from recent advances in cloud native deployment and maintenance patterns. Moreover, HashiCorp supports deploying Vault on Kubernetes clusters, with some considerations that apply to most Kubernetes offerings.

This article and the accompanying example code demonstrate how HashiCorp Vault Enterprise can be deployed on a dedicated Exoscale Scalable Kubernetes Service (SKS) cluster. We can recommend a deployment in Swiss datacenters for a secrets management solution that focuses on high cloud security standards without giving up the advantages of recent cloud native deployment methodologies.

Check out the official Vault on Kubernetes Deployment Guide, especially its “Production Deployment Checklist”, before starting any production deployment.

Kubernetes Cluster Deployment with Terraform Module

Camptocamp's Exoscale SKS Terraform module already provides an excellent basis for bootstrapping a small Kubernetes cluster. Head over to the Exoscale blog post to find out more about invoking the module.

For demonstration purposes, we improved that module invocation with the following best practices:

- Store and secure the Terraform state for the cluster in the GitLab Terraform state backend

- Use a GitLab pipeline that runs the Terraform module in a secure environment and integrates the Terraform plan and output into GitLab merge requests
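As a rough illustration of the state backend setup, the following Python sketch assembles the `-backend-config` flags for Terraform's `http` backend pointing at GitLab's Terraform state API. The GitLab URL, project ID and state name are placeholders; in a pipeline they would typically come from GitLab's predefined `CI_API_V4_URL` and `CI_PROJECT_ID` variables. The token is intentionally left out and assumed to be supplied through the `TF_HTTP_PASSWORD` environment variable, which the `http` backend reads.

```python
import os
from urllib.parse import quote


def backend_config_args(api_url: str, project_id: int, state_name: str,
                        username: str = "gitlab-ci-token") -> list[str]:
    """Build `terraform init -backend-config=...` flags for GitLab's HTTP state backend.

    The token is deliberately not part of the flags: the http backend also
    reads TF_HTTP_PASSWORD from the environment, which keeps it out of the
    job log and the process list.
    """
    state = quote(state_name, safe="")  # state names end up in a URL path
    address = f"{api_url.rstrip('/')}/projects/{project_id}/terraform/state/{state}"
    settings = {
        "address": address,
        "lock_address": f"{address}/lock",
        "unlock_address": f"{address}/lock",
        "username": username,
        "lock_method": "POST",
        "unlock_method": "DELETE",
        "retry_wait_min": "5",
    }
    return [f"-backend-config={key}={value}" for key, value in settings.items()]


if __name__ == "__main__":
    # CI_API_V4_URL and CI_PROJECT_ID are predefined in GitLab CI; the
    # fallbacks and the state name are hypothetical values for local runs.
    flags = backend_config_args(
        os.environ.get("CI_API_V4_URL", "https://gitlab.example.com/api/v4"),
        int(os.environ.get("CI_PROJECT_ID", "42")),
        os.environ.get("TF_STATE_NAME", "sks-cluster"),
    )
    print("terraform init " + " ".join(flags))
```

Keeping the lock and unlock addresses identical and only switching the HTTP method is how GitLab's state API distinguishes locking from unlocking.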

As input, the pipeline expects the Exoscale API credentials as CI/CD variables. The module relies on the exo CLI utility to retrieve the Kubeconfig, so the example pipeline ensures that the binary is available in each job: the first job downloads it, and subsequent jobs copy it from the cache.

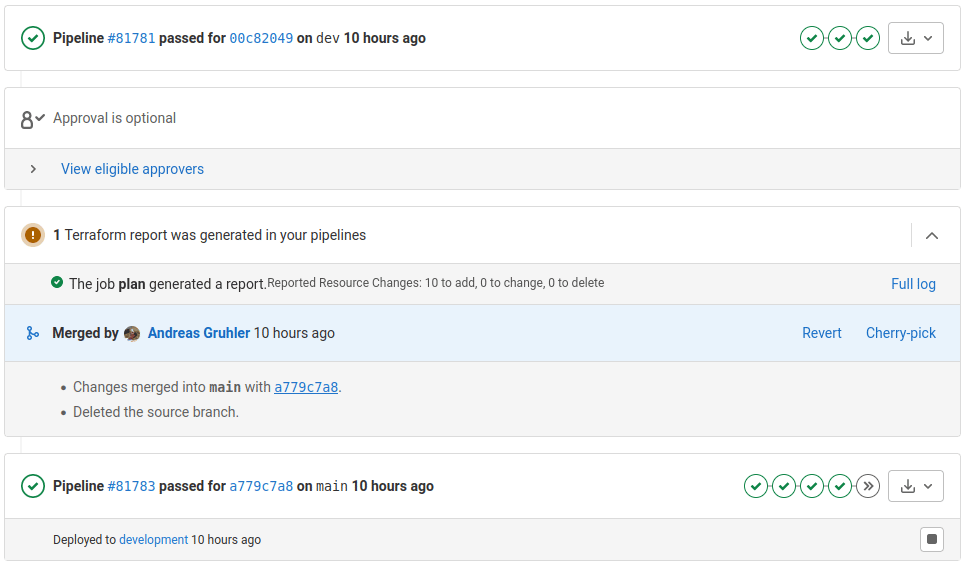
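The download-once, copy-from-cache logic described above can be sketched in Python as follows. This only illustrates the idea: in the actual pipeline, GitLab's `cache:` keyword persists the directory between jobs, and the `download` callable is a stand-in for whatever fetches the `exo` release. The credential variable names `EXOSCALE_API_KEY` and `EXOSCALE_API_SECRET` are an assumption based on what the `exo` CLI and the Exoscale Terraform provider read; adjust them to the CI/CD variables you actually define.

```python
import os
import stat
import tempfile
from pathlib import Path
from typing import Callable

# Assumed variable names; match them to your GitLab CI/CD variables.
REQUIRED_VARS = ("EXOSCALE_API_KEY", "EXOSCALE_API_SECRET")


def missing_credentials(env: dict[str, str]) -> list[str]:
    """Return the required credential variables that are unset or empty."""
    return [name for name in REQUIRED_VARS if not env.get(name)]


def ensure_binary(cache_dir: Path, name: str, download: Callable[[Path], None]) -> Path:
    """Return the path to `name` in `cache_dir`, calling `download` only on a cache miss."""
    cache_dir.mkdir(parents=True, exist_ok=True)
    target = cache_dir / name
    if not target.exists():
        download(target)  # first job: fetch the binary into the cached directory
        # mark it executable so later jobs can run it straight from the cache
        target.chmod(target.stat().st_mode | stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH)
    return target


if __name__ == "__main__":
    missing = missing_credentials(dict(os.environ))
    if missing:
        print("missing CI/CD variables:", ", ".join(missing))

    def fake_download(path: Path) -> None:
        # hypothetical stand-in for fetching the real exo release
        path.write_text("#!/bin/sh\necho exo\n")

    with tempfile.TemporaryDirectory() as tmp:
        first = ensure_binary(Path(tmp), "exo", fake_download)
        again = ensure_binary(Path(tmp), "exo", fake_download)  # cache hit, no download
        print(first == again)
```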

Finally, the pipeline outputs the sensitive Kubeconfig and a Terraform report artifact. The GitLab merge request widget interprets this artifact and shows the number of Terraform resources to be added, changed, and deleted.
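GitLab's Terraform report is a small JSON document with `create`, `update` and `delete` counts, declared in the job under `artifacts:reports:terraform`. A minimal Python sketch that derives it from `terraform show -json <planfile>` output could look like this. It counts the `actions` of every entry in `resource_changes`, which, to our understanding, matches the `jq` filter shown in GitLab's documentation; the script and file names in the usage comment are hypothetical.

```python
import json
import sys
from collections import Counter


def gitlab_terraform_report(plan: dict) -> dict[str, int]:
    """Summarise `terraform show -json` plan output in GitLab's report format.

    A replacement appears as ["delete", "create"] (or the reverse) and
    therefore counts towards both "create" and "delete".
    """
    actions = Counter(
        action
        for change in plan.get("resource_changes", [])
        for action in change.get("change", {}).get("actions", [])
    )
    return {"create": actions["create"], "update": actions["update"], "delete": actions["delete"]}


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage: terraform show -json plan.cache > plan.json
    #        python report.py plan.json report.json
    with open(sys.argv[1]) as src:
        report = gitlab_terraform_report(json.load(src))
    with open(sys.argv[2], "w") as dst:
        json.dump(report, dst)
```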

⚠️ Make sure you understand how to restrict privileges on the Terraform state backend, the CI/CD pipeline input variables, and the sensitive Kubeconfig output artifacts.

Note that production environments should be planned with several SKS environments or test stages.

Deploy Argo CD on Kubernetes

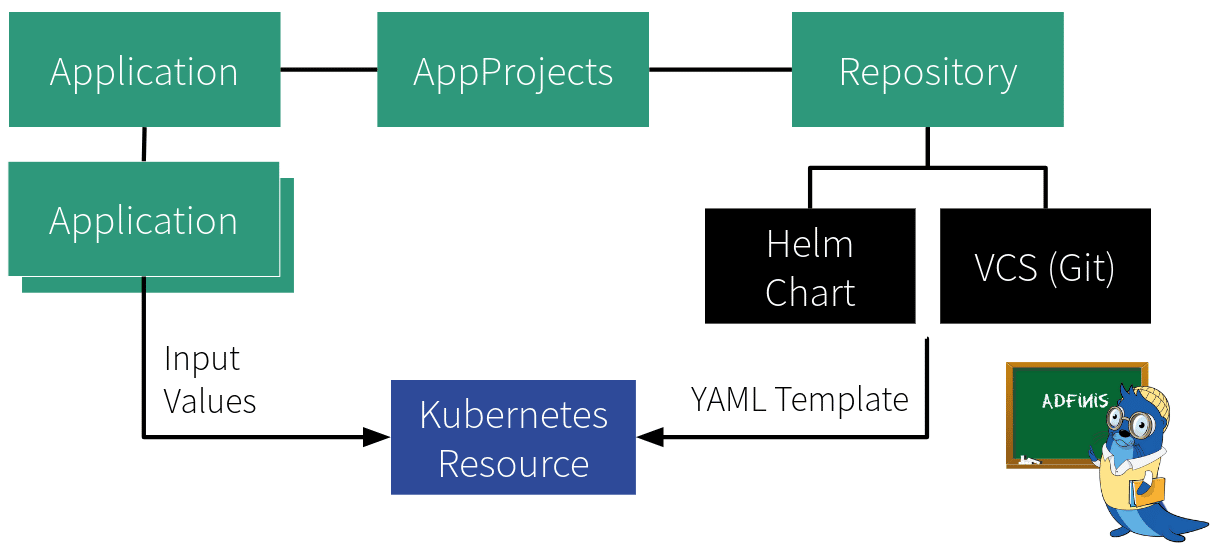

Argo CD allows us to define the state of resources in a Kubernetes cluster in a declarative and version-controlled manner. The state of each resource is described through Helm charts and YAML manifests that can be enriched with specific traits when a particular Application is instantiated. The live state in the cluster is continuously compared against the desired state in Git to detect any configuration drift.

The Argo CD AppProject defines the Application scope:

- Types of Kubernetes resources that can be created

- Kubernetes cluster and target namespace

- Allowed repository sources
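To make these three scope dimensions concrete, here is a hypothetical AppProject expressed as a Python dict (the shape a YAML manifest loads into), together with a deliberately simplified check of whether an Application would fall inside its scope. The project name, repository URL and namespace are made up. Argo CD's real evaluation also handles blacklists, negated patterns and defaults that this sketch ignores, so treat it as a mental model only.

```python
from fnmatch import fnmatchcase

# Hypothetical AppProject; field names follow the argoproj.io/v1alpha1 spec.
VAULT_PROJECT = {
    "apiVersion": "argoproj.io/v1alpha1",
    "kind": "AppProject",
    "metadata": {"name": "security-apps", "namespace": "argocd"},
    "spec": {
        # allowed repository sources
        "sourceRepos": ["https://git.example.com/platform/*"],
        # target cluster and namespace
        "destinations": [{"server": "https://kubernetes.default.svc", "namespace": "vault"}],
        # types of resources that may be created
        "clusterResourceWhitelist": [{"group": "rbac.authorization.k8s.io", "kind": "ClusterRole*"}],
        "namespaceResourceWhitelist": [{"group": "*", "kind": "*"}],
    },
}


def is_permitted(project: dict, repo: str, server: str, namespace: str,
                 group: str, kind: str, cluster_scoped: bool = False) -> bool:
    """Simplified model of the source, destination and resource-type checks."""
    spec = project["spec"]
    repo_ok = any(fnmatchcase(repo, pattern) for pattern in spec.get("sourceRepos", []))
    dest_ok = any(
        fnmatchcase(server, d.get("server", "")) and fnmatchcase(namespace, d.get("namespace", ""))
        for d in spec.get("destinations", [])
    )
    # Whitelists are treated as exhaustive here (missing list = nothing allowed).
    key = "clusterResourceWhitelist" if cluster_scoped else "namespaceResourceWhitelist"
    kind_ok = any(
        fnmatchcase(group, rule["group"]) and fnmatchcase(kind, rule["kind"])
        for rule in spec.get(key, [])
    )
    return repo_ok and dest_ok and kind_ok
```

For example, a StatefulSet from the platform repository targeting the `vault` namespace passes, while the same manifest from a foreign repository or aimed at `default` is rejected.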

Leveraging the App of Apps Pattern

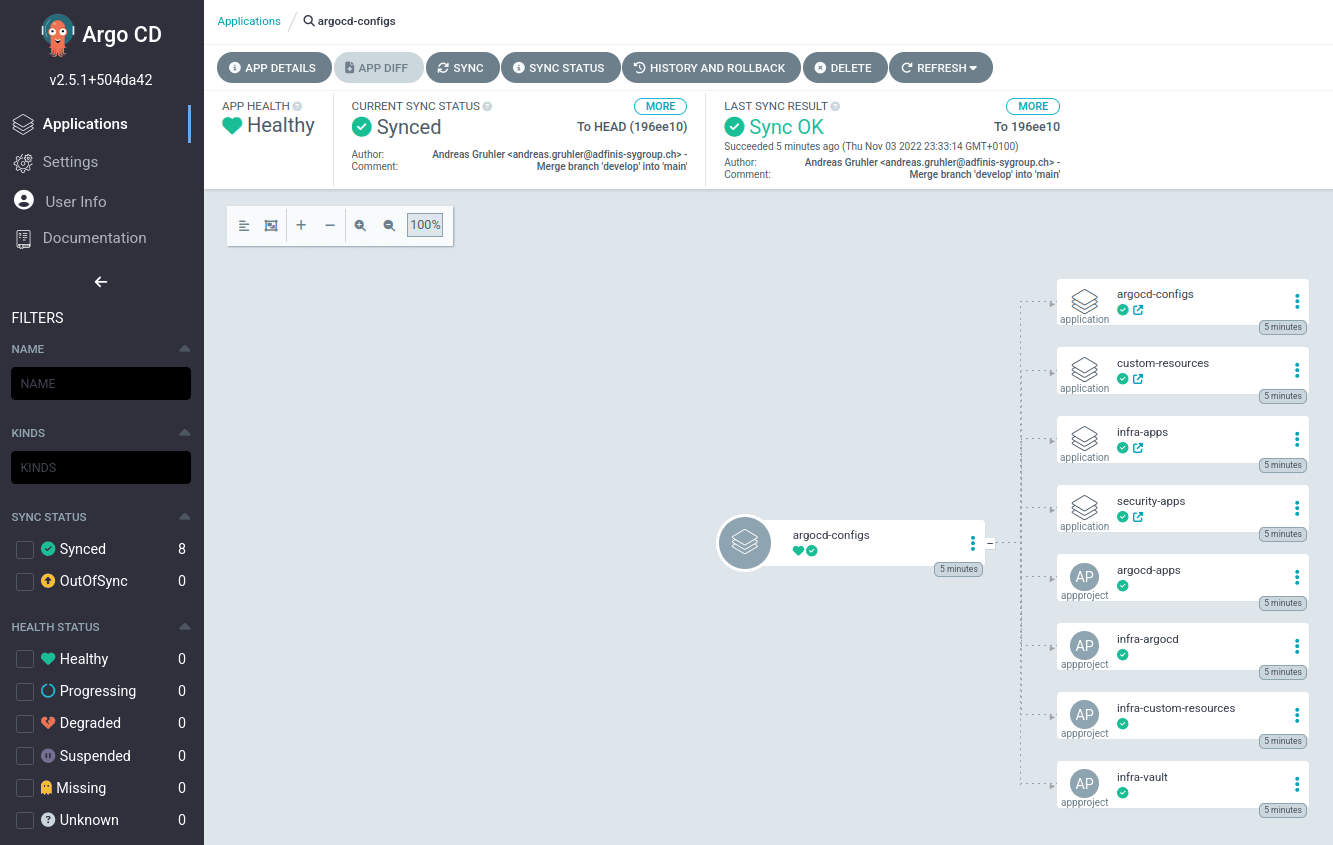

Even though an Argo CD instance can continuously compare and sync the current cluster state with the state defined in Git, the initial AppProject and Application need to be created manually (Argo CD bootstrapping).

After these initial configuration steps, the first Application serves as an abstract container that keeps all configuration objects in sync. This is called the “App of Apps Pattern”: the first Argo CD app consists only of other apps.

When Argo CD is deployed as an “unmanaged” service on a Kubernetes cluster, one Argo CD Application can also be reserved to recursively manage the state of the Argo CD deployment itself (a cyclic dependency).
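A minimal sketch of the App of Apps layout, generating Application manifests as Python dicts. The repository URL, paths and the `security-apps` project name are hypothetical. Only `ROOT` would be applied by hand during bootstrapping; the child manifests would live in Git under the root app's path, and the `argocd` child points back at Argo CD's own deployment to illustrate the self-management described above.

```python
import json

REPO = "https://git.example.com/platform/argocd-apps.git"  # hypothetical repository
IN_CLUSTER = "https://kubernetes.default.svc"


def application(name: str, path: str, namespace: str, project: str = "default",
                revision: str = "main") -> dict:
    """Return an Argo CD Application manifest (as a dict) pointing at a path in Git."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Application",
        "metadata": {"name": name, "namespace": "argocd"},
        "spec": {
            "project": project,
            "source": {"repoURL": REPO, "targetRevision": revision, "path": path},
            "destination": {"server": IN_CLUSTER, "namespace": namespace},
            # keep the cluster converged on Git: delete removed objects, undo drift
            "syncPolicy": {"automated": {"prune": True, "selfHeal": True}},
        },
    }


# Child apps rendered by the root app; "argocd" manages Argo CD itself.
CHILDREN = [
    application("argocd", "apps/argocd", "argocd"),
    application("vault", "apps/vault", "vault", project="security-apps"),
]

# The only manually created Application: its path holds the CHILDREN manifests.
ROOT = application("root", "apps/root", "argocd")

if __name__ == "__main__":
    # kubectl accepts JSON as well as YAML: python apps.py | kubectl apply -f -
    print(json.dumps(ROOT, indent=2))
```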

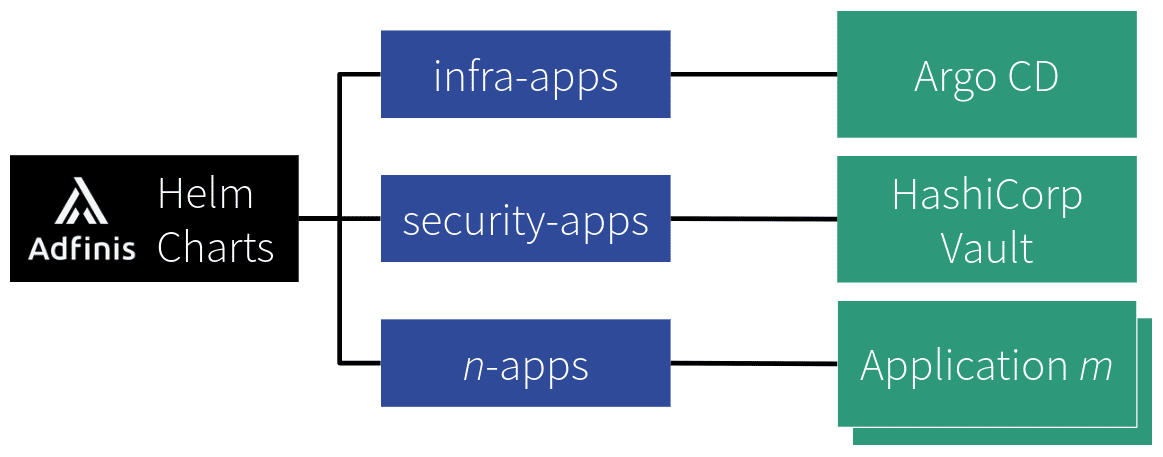

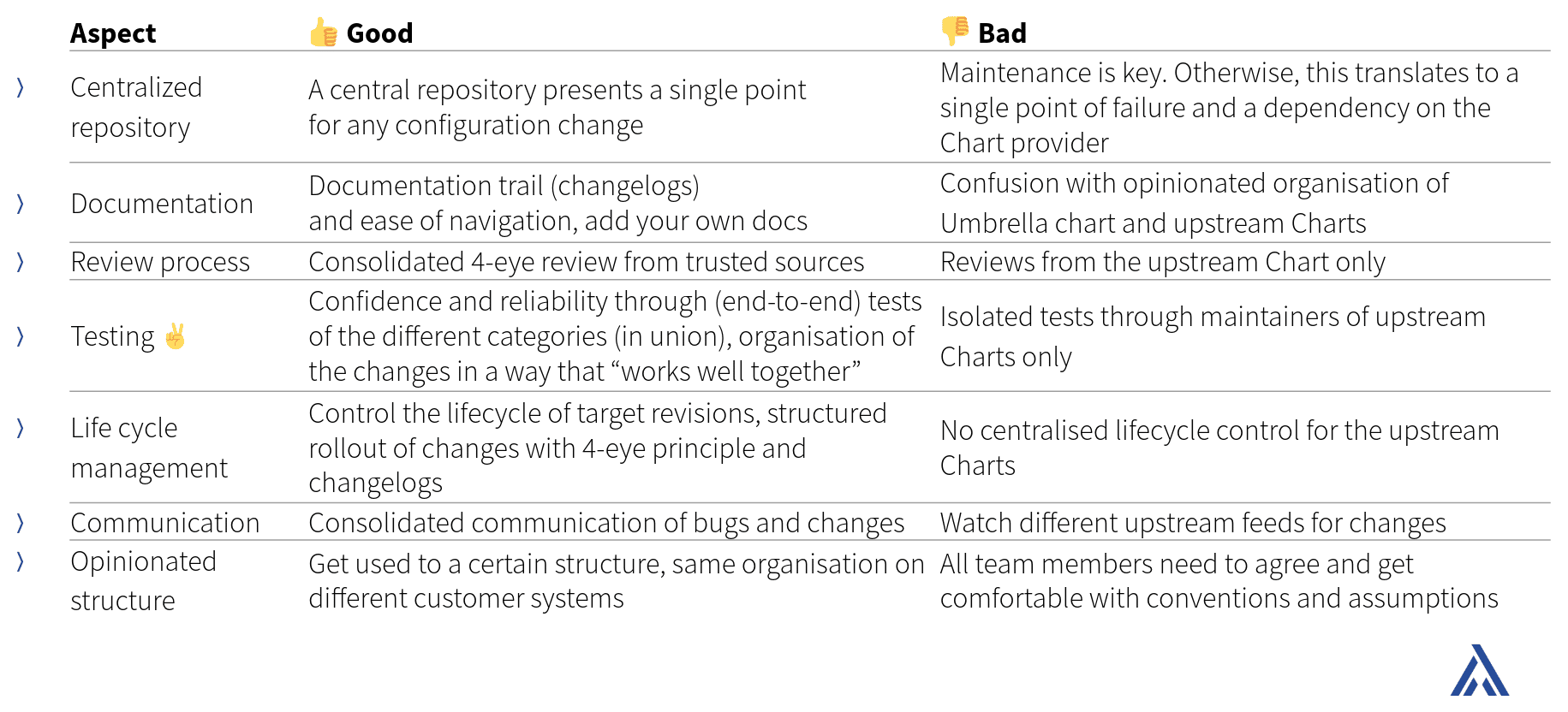

The App of Apps approach is especially useful to categorise and structure applications so that they “work well together”. At Adfinis we maintain an opinionated version of this categorisation using “umbrella charts”: each Application chart may have multiple subcharts, which represent the actual target Applications.

This has proven to be a very useful pattern for focusing maintenance efforts in a central location. As maintainers of several applications in multiple clusters, it allows us to document and control the lifecycle and target revisions of downstream consumers (i.e., Argo CD instances on customers' premises).
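An umbrella chart is a regular Helm chart whose `dependencies` are the target applications. The Python sketch below builds such a `Chart.yaml` (as a dict) and flags dependencies that are not pinned to an exact version, one simple way to keep control over the revisions that downstream Argo CD instances receive. The chart name and version numbers are placeholders; the HashiCorp Helm repository URL is the public one.

```python
import json
import re

EXACT_VERSION = re.compile(r"^\d+\.\d+\.\d+$")


def umbrella_chart(name: str, version: str, subcharts: list[dict]) -> dict:
    """Build a Helm `Chart.yaml` (apiVersion v2) whose dependencies are the target apps."""
    return {
        "apiVersion": "v2",
        "name": name,
        "version": version,
        "dependencies": [
            # `condition` lets each cluster toggle a subchart, e.g. via `vault.enabled`
            {**sub, "condition": f"{sub['name']}.enabled"}
            for sub in subcharts
        ],
    }


def unpinned(chart: dict) -> list[str]:
    """Names of dependencies whose version is a range rather than an exact release."""
    return [dep["name"] for dep in chart["dependencies"]
            if not EXACT_VERSION.match(str(dep["version"]))]


if __name__ == "__main__":
    chart = umbrella_chart("security-apps", "0.1.0", [
        # placeholder version: pin to the chart release you have tested
        {"name": "vault", "version": "1.2.3", "repository": "https://helm.releases.hashicorp.com"},
    ])
    print(json.dumps(chart, indent=2))
    print("unpinned:", unpinned(chart))
```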

HashiCorp Vault Enterprise on SKS

With the above concepts in mind, we can deploy HashiCorp Vault Enterprise on SKS using the security-apps App provided by Adfinis.

There are a few common pitfalls in a Vault Enterprise deployment:

- Vault Enterprise clusters require a highly available storage backend such as Consul or Raft. In particular, the Vault Enterprise Docker image fails to boot with the file storage backend. We typically recommend Raft integrated storage for any project, because it is built into HashiCorp Vault and makes it easy to create automated snapshot schedules without third-party agents.

- With the Raft backend, the API address of Vault needs to match the contents of the TLS certificate (and vice versa). In our experience, a misconfigured certificate or API address often leads to TLS certificate verification errors when new nodes join the cluster, when working with advanced features such as plugins, or when setting up DR replication.

- A valid HashiCorp Vault Enterprise license must be available to the license autoloading process at startup. Vault Enterprise does not start without a license.
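These three pitfalls can be caught before a rollout with a small pre-flight check. The Python sketch below inspects a values dict shaped like the official `hashicorp/vault` Helm chart (`server.ha.raft.enabled`, `server.enterpriseLicense.secretName`) and verifies that every planned API address is covered by a certificate SAN. It is an illustration under assumptions: the license can also be supplied by other means (for example the `VAULT_LICENSE` environment variable), and the SAN matching here handles only exact names and a single left-most wildcard label.

```python
from urllib.parse import urlsplit


def san_covers(host: str, san: str) -> bool:
    """True if a DNS SAN (exact, or one left-most `*.` wildcard label) covers host."""
    host, san = host.lower().rstrip("."), san.lower().rstrip(".")
    if san.startswith("*."):
        # a wildcard matches exactly one label: *.vault-internal covers vault-0.vault-internal
        head, _, rest = host.partition(".")
        return bool(head) and rest == san[2:]
    return host == san


def preflight(values: dict, api_addrs: list[str], cert_sans: list[str]) -> list[str]:
    """Return problems matching the pitfalls above; an empty list means none were found."""
    problems = []
    server = values.get("server", {})
    ha = server.get("ha", {})
    if not (ha.get("enabled") and ha.get("raft", {}).get("enabled")):
        problems.append("storage: enable server.ha.enabled and server.ha.raft.enabled")
    if not server.get("enterpriseLicense", {}).get("secretName"):
        problems.append("license: server.enterpriseLicense.secretName is not set")
    for addr in api_addrs:
        host = urlsplit(addr).hostname or ""
        if not any(san_covers(host, san) for san in cert_sans):
            problems.append(f"tls: {host} ({addr}) is not covered by the certificate SANs")
    return problems
```

For instance, with SANs `vault.vault.svc` and `*.vault-internal`, the per-pod address `https://vault-0.vault-internal:8200` passes, whereas an external name that is missing from the certificate is reported.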

Vault is an excellent solution to manage short-lived and dynamic credentials. The example code demonstrates this capability by including the secrets engine plugin for Exoscale. This plugin dynamically creates short-lived API credentials for authentication against the Exoscale API.
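Dynamic secrets come wrapped in a lease. As a hedged sketch, the Python code below reads a secret over Vault's HTTP API using only the standard library and extracts the lease fields (`lease_id`, `lease_duration`, `renewable`) that dynamic secret responses carry. The Exoscale-specific path in the usage note assumes the plugin is mounted at `exoscale/` and is a guess at its layout; check the plugin's documentation for the actual paths and response fields.

```python
import json
import ssl
import urllib.request
from dataclasses import dataclass
from typing import Optional


@dataclass
class Lease:
    lease_id: str
    ttl_seconds: int
    renewable: bool
    data: dict


def read_request(vault_addr: str, token: str, path: str) -> urllib.request.Request:
    """Build a GET request for a secret path, authenticated with a Vault token."""
    return urllib.request.Request(
        f"{vault_addr.rstrip('/')}/v1/{path.lstrip('/')}",
        headers={"X-Vault-Token": token},
        method="GET",
    )


def parse_lease(payload: dict) -> Lease:
    """Extract the lease envelope Vault wraps around a dynamic secret."""
    return Lease(
        lease_id=payload["lease_id"],
        ttl_seconds=int(payload["lease_duration"]),
        renewable=bool(payload["renewable"]),
        data=payload["data"],
    )


def read_dynamic_secret(vault_addr: str, token: str, path: str,
                        context: Optional[ssl.SSLContext] = None) -> Lease:
    """Fetch a secret and return its lease; `context` can trust a private CA."""
    with urllib.request.urlopen(read_request(vault_addr, token, path), context=context) as resp:
        return parse_lease(json.load(resp))
```

With the demo's self-signed certificate, a caller would pass a context that trusts its CA, e.g. `read_dynamic_secret("https://vault.vault.svc:8200", token, "exoscale/apikey/ci", ssl.create_default_context(cafile="ca.pem"))`, where the address, role name and CA file are hypothetical. `ttl_seconds` tells the consumer when Vault will revoke the credentials unless the lease is renewed.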

Once installed and configured properly, HashiCorp Vault Enterprise opens many more possibilities to strengthen and secure modern cloud infrastructures. In case of any questions, please let us know. The Adfinis team is happy to support your business on the journey to establish and maintain modern secrets management practices.

Limitations of the Example Code

The example code is provided without any guarantee or warranty and should not be used in production environments. Specifically, this demo has the following limitations (there might be more):

- No auto-unsealing

- No persistent data storage and no audit logs

- No Ingress; the Vault API is not exposed outside of this Kubernetes cluster

- No identity management and no Vault policies

- Self-signed TLS certificates

If you decide to start your own journey with HashiCorp Vault Enterprise or secrets management in general, we are interested to hear about your specific requirements and eager to find out how we can help you improve your security posture.